Background

It is a given that each new processor architecture from Intel features enhancements for workload-specific performance. That quest continues with Broadwell-E, which features a mechanism called AVX Offset. The parent of this mechanism was first seen on server platforms a couple of years ago, although it was not available for user adjustment – server CPUs are usually locked, so no surprises there. The mechanism was introduced because AVX workloads consume a lot more current than ones that use the default instruction set.

The AVX Offset mechanism is designed to work in conjunction with Auto mode for voltage. When an AVX workload is detected, the processor reduces its frequency, and the on-die power control unit (PCU) follows with a reduction in core voltage. These low-latency, on-the-fly changes keep the CPU within Intel’s defined TDP limit. Ultimately, this allows higher processor frequencies for non-AVX workloads, which has obvious performance benefits.

Unlike on server platforms, the AVX Offset register is open for adjustment on Broadwell-E processors, which lets us define the core ratio used for AVX workloads. The option is certainly welcome. However, there is a catch: processor voltage must be left in Auto mode for the offset to lower voltage, and with it operating temperatures, under AVX workloads. This has implications for overclocking – more on this later.
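
To make the ratio arithmetic concrete, here is a minimal Python sketch of how an AVX Offset changes the operating frequency. It assumes a simplified model where the offset is subtracted from the core ratio (multiplier) only while AVX code is running, and where BCLK stays at the stock 100MHz. The 43x ratio and the offset of 3 are hypothetical values, and the model ignores the voltage reduction handled by the PCU.

```python
# Simplified model of the AVX Offset: the offset is subtracted from the
# core ratio (multiplier) only while AVX instructions are executing.
# Voltage behaviour is not modelled.
BCLK_MHZ = 100  # assumption: stock 100MHz base clock


def effective_ratio(core_ratio: int, avx_offset: int, avx_active: bool) -> int:
    """Return the multiplier the core runs at under this simplified model."""
    if avx_offset < 0:
        raise ValueError("AVX offset must be non-negative")
    return core_ratio - avx_offset if avx_active else core_ratio


def frequency_mhz(core_ratio: int, avx_offset: int, avx_active: bool) -> int:
    """Core frequency in MHz = effective ratio x BCLK."""
    return effective_ratio(core_ratio, avx_offset, avx_active) * BCLK_MHZ


if __name__ == "__main__":
    # Hypothetical settings: 43x all-core ratio with an AVX offset of 3.
    for avx in (False, True):
        print(f"AVX active={avx}: {frequency_mhz(43, 3, avx)} MHz")
```

With those inputs, non-AVX work runs at 4300MHz and AVX work at 4000MHz. A larger offset buys more thermal margin under AVX loads at the cost of AVX throughput, without touching the non-AVX frequency.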

Another brand-new feature that aims to enhance single-threaded performance for Broadwell-E is what Intel refers to as the favorite core. The favorite core is determined during the production process and is touted to have the best overclocking potential. The ability to apply an overclock to each physical processor core independently is not new, but this is the first time Intel are making official reference to the best core on the die.

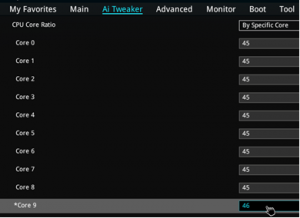

The favorite core is highlighted by an asterisk in UEFI

And there’s good reason for that. Previously, per-core overclocking was of limited use because Windows balances loads across all physical and logical processors rather than running applications on specific cores. There are, of course, ways of manually assigning an application to a given core by setting its affinity via Windows Task Manager, but for most users, doing so is a chore.
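
Setting affinity boils down to a bitmask in which bit i allows the process to run on logical processor i. The Python sketch below builds such a mask for a single physical core. The function name `affinity_mask` is hypothetical, and the sketch assumes Hyper-Threading siblings are numbered consecutively (core 0 maps to logical processors 0 and 1, and so on), which is typical on Windows but worth confirming on your own system.

```python
# Build a processor affinity mask: bit i set means the process may run on
# logical processor i.
def affinity_mask(physical_core: int, threads_per_core: int = 2) -> int:
    """Return a mask covering every logical processor of one physical core.

    Assumption: Hyper-Threading siblings are numbered consecutively
    (core 0 -> logical 0 and 1, core 1 -> logical 2 and 3, ...).
    """
    if physical_core < 0 or threads_per_core < 1:
        raise ValueError("invalid core index or thread count")
    first = physical_core * threads_per_core
    mask = 0
    for logical in range(first, first + threads_per_core):
        mask |= 1 << logical  # allow this logical processor
    return mask


if __name__ == "__main__":
    # Hypothetical: favorite core reported as core 3 on a 10-core, 20-thread CPU.
    print(hex(affinity_mask(3)))
```

For physical core 3, this yields 0xc0 (logical processors 6 and 7). A hexadecimal mask of this kind is what the `start /affinity` switch in the Windows command prompt accepts, which is one way to avoid clicking through Task Manager each time an application is launched.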

This is where things become interesting, because Microsoft’s upcoming Redstone update for Windows 10 natively supports assigning applications to specific cores. That update is not here yet, so rather than make us wait, Intel provides a Turbo Boost Max Technology 3.0 driver for Broadwell-E that adds this functionality to current versions of Windows. In theory, this lets heavily threaded applications use every core, while light, single-threaded workloads run on the favorite core and benefit from its extra overclocking headroom.

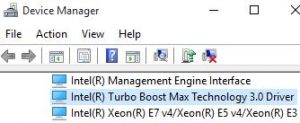

Intel’s new Turbo Boost driver locks applications to specific cores

So how well does this work in practice? At stock processor frequencies, quite well. As an example, the deca-core i7-6950X’s stock frequency is 3GHz when running multi-core/threaded workloads. When a single-threaded application is run, it is assigned to the favorite core, which ramps its ratio to run at 4GHz. That is a considerable boost for single-threaded application performance.

However, many of us are preconditioned by our overclocking experience on a previous platform, and from that perspective, our expectations are likely north of 4GHz for all cores, let alone a single core. And why shouldn’t they be? The Haswell-E architecture has proven capable of overclocked frequencies beyond 4.4GHz with water cooling. Average all-core frequencies land around 4.5GHz for most samples, so an upgrade is not enticing unless Broadwell-E processors overclock to similar frequencies and/or are more efficient per clock cycle. Most of the time, we expect both criteria to be met, which is not exactly realistic, but that is just how our logical minds illogically work.

Internal tests show that an i7-6950X overclocked to 4.3GHz beats an i7-5960X running at 4.5GHz by a good margin in encoding tasks, which settles the debate for heavily threaded workloads, although the i7-6950X’s two extra cores account for much of that lead. However, there are a few items worth noting at this point. Not all i7-6950X CPUs reach 4.3GHz easily, and average overclocked frequencies for all cores are likely to be between 4.0 and 4.2GHz. While that is enough performance to overcome Haswell-E’s frequency advantage in multi-core workloads, it will fall short of doing so in certain single-threaded applications. Before we get too hung up on that, remember that we do have a potential lifeline in the form of individual ratio control and Intel’s new driver, which can be used to overclock the CPU’s best cores beyond the “all cores loaded” frequency limit and assign applications to specific cores.
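
As a rough illustration of why an all-core overclock of 4.0 to 4.2GHz can win in multi-core workloads yet trail in single-threaded ones, the sketch below works through the frequencies quoted in this section. It is deliberately naive: it assumes well-threaded throughput scales with core count multiplied by frequency, and single-threaded throughput with frequency alone, ignoring any per-clock differences between the two architectures.

```python
# Back-of-the-envelope comparison using the frequencies quoted above.
# Naive assumptions: multi-threaded throughput ~ cores x GHz, single-threaded
# throughput ~ GHz; per-clock (IPC) differences are ignored.
def multi_thread_score(cores: int, ghz: float) -> float:
    """Aggregate 'core-GHz' as a crude proxy for threaded throughput."""
    return cores * ghz


def single_thread_gap_pct(ghz: float, reference_ghz: float) -> float:
    """Percentage frequency deficit of `ghz` relative to `reference_ghz`."""
    return (reference_ghz - ghz) / reference_ghz * 100


HASWELL_E = (8, 4.5)     # i7-5960X: 8 cores at a typical 4.5GHz overclock
BROADWELL_E = (10, 4.0)  # i7-6950X: 10 cores at the low end of 4.0-4.2GHz

if __name__ == "__main__":
    print("Multi-thread:", multi_thread_score(*BROADWELL_E),
          "vs", multi_thread_score(*HASWELL_E))
    for ghz in (4.0, 4.2):
        gap = single_thread_gap_pct(ghz, HASWELL_E[1])
        print(f"Single-thread deficit at {ghz}GHz: {gap:.1f}%")
```

Under those assumptions, the i7-6950X at 4.0GHz scores 40.0 core-GHz against 36.0 for the i7-5960X at 4.5GHz, yet trails by roughly 6.7 to 11.1 percent in frequency on a single thread. That single-threaded gap is the one a favorite core running above the all-core ratio could help close.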